AI Data Center Cooling Solutions for High-Density Racks

AI and GPU workloads generate far more heat than traditional compute infrastructure. A single rack loaded with current-generation GPU accelerators can draw 40kW to 100kW of power, compared to 5kW to 10kW for a standard server rack. That concentration of heat demands a different approach to airflow management, one that starts at the rack level and scales across the facility.

EziBlank provides the physical layer of airflow control that keeps high-density AI racks within safe operating temperatures: blanking panels, aisle containment, floor tiles, cable grommets, and real-time monitoring software.

Why AI Workloads Change the Cooling Equation

Traditional data centres were built for rows of similar racks drawing similar power. Cooling systems were sized for an average load spread evenly across the floor. AI infrastructure breaks this model. GPU clusters concentrate massive thermal loads in small areas, creating hot spots that overwhelm cooling systems designed for uniform density.

When a row of AI racks draws 10 times the power of the row beside it, the cooling infrastructure has to deliver roughly 10 times the airflow to that specific zone (for the same temperature rise across the equipment). Any gap in the airflow path, whether an open rack unit, an unsealed cable cutout, or a missing containment panel, allows hot exhaust air to recirculate. The result is rising inlet temperatures, GPU thermal throttling, and reduced training performance.
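
The airflow needed to remove a given heat load follows the sensible-heat relation V = P / (ρ · c_p · ΔT). The sketch below assumes standard air properties (ρ ≈ 1.2 kg/m³, c_p ≈ 1005 J/(kg·K)) and an illustrative 12 K temperature rise across the servers. The function name is hypothetical, and real ΔT values depend on the equipment and its fan settings.

```python
# Sketch: airflow needed to carry away a rack's heat load (sensible heat only).
# Assumptions: air density 1.2 kg/m³ and specific heat 1005 J/(kg·K), i.e. air
# near 20 °C at sea level. The 12 K temperature rise used below is illustrative.

AIR_DENSITY_KG_M3 = 1.2
AIR_CP_J_PER_KG_K = 1005.0
M3S_TO_CFM = 2118.88  # 1 m³/s is about 2118.88 cubic feet per minute


def required_airflow_m3s(power_kw: float, delta_t_k: float) -> float:
    """Volumetric airflow (m³/s) that removes power_kw with a delta_t_k air temperature rise."""
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return power_kw * 1000.0 / (AIR_DENSITY_KG_M3 * AIR_CP_J_PER_KG_K * delta_t_k)


if __name__ == "__main__":
    # A standard rack versus a GPU rack, both with the same assumed 12 K rise.
    for rack_kw in (8.0, 80.0):
        flow = required_airflow_m3s(rack_kw, 12.0)
        print(f"{rack_kw:5.1f} kW -> {flow:.2f} m³/s ({flow * M3S_TO_CFM:,.0f} CFM)")
```

Because airflow scales linearly with power at a fixed ΔT, the 80 kW rack needs ten times the airflow of the 8 kW rack.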

The physics are straightforward. Seal the gaps, direct the cold air where it is needed, and remove the hot air before it contaminates the cold supply. That is what EziBlank products are built to do.

Blanking Panels for High-Density AI Racks

Every open rack unit in an AI cluster is a leak in the airflow system. At the power densities involved in GPU computing, even a few open U-spaces can raise inlet temperatures by several degrees, enough to trigger throttling on heat-sensitive accelerator cards.
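
One way to see why a few open U-spaces matter is a simple mixing model: if a fraction f of the air entering a server is recirculated exhaust, the inlet temperature is a weighted average, T_in = (1 − f) · T_supply + f · T_exhaust. The sketch below assumes ideal mixing and equal air properties in both streams; the 20 °C supply and 40 °C exhaust figures are illustrative, not measured values.

```python
# Sketch: inlet temperature when part of a server's intake is recirculated exhaust.
# Assumes ideal mixing of two air streams with equal density and specific heat.


def mixed_inlet_temp_c(supply_c: float, exhaust_c: float, recirc_fraction: float) -> float:
    """Weighted average of supply and exhaust temperature by recirculated fraction."""
    if not 0.0 <= recirc_fraction <= 1.0:
        raise ValueError("recirc_fraction must be between 0 and 1")
    return (1.0 - recirc_fraction) * supply_c + recirc_fraction * exhaust_c


if __name__ == "__main__":
    # Illustrative figures: 20 °C supply air, 40 °C GPU exhaust.
    for fraction in (0.0, 0.05, 0.10, 0.20):
        t_in = mixed_inlet_temp_c(20.0, 40.0, fraction)
        print(f"{fraction:4.0%} recirculation -> {t_in:.1f} °C inlet")
```

With these assumed figures, every 5% of recirculated exhaust adds 1 °C at the server inlet.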

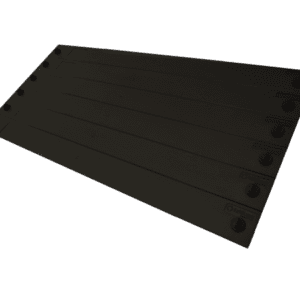

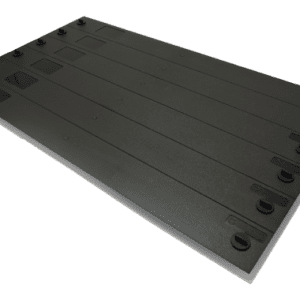

High-density blanking panels from EziBlank seal these gaps with a tool-free, snap-on design that takes seconds to install. The panels are made from flame-retardant ABS plastic (UL94-V0 rated), ship as 6RU modular blocks that snap apart into 1U segments (the 21-inch ETSI version comes as a 10SU block), and fit 19-inch, 21-inch, and 23-inch rack widths.
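
For planning, the number of panel blocks to order follows from the count of empty rack units. The sketch below assumes the 6RU block size described above and that a block's 1U segments can be split across separate gaps; the rack layout and function names are hypothetical.

```python
# Sketch: how many 6RU blanking blocks a rack needs, given which U positions are occupied.
# Assumes each block snaps apart into 1U segments that can fill separate gaps.
import math


def open_units(rack_height_u: int, occupied: set[int]) -> list[int]:
    """Rack-unit positions (1-based) with no equipment installed."""
    return [u for u in range(1, rack_height_u + 1) if u not in occupied]


def blocks_needed(open_u: int, block_size_u: int = 6) -> int:
    """Whole blocks required to cover open_u empty rack units."""
    return math.ceil(open_u / block_size_u)


if __name__ == "__main__":
    # Hypothetical 42U rack: GPU nodes in U1-U8 and U20-U27, everything else empty.
    occupied = set(range(1, 9)) | set(range(20, 28))
    empty = open_units(42, occupied)
    print(f"{len(empty)} empty U -> order {blocks_needed(len(empty))} blocks")
```

In this example, 26 empty units need five blocks, leaving four spare 1U segments for the next reconfiguration.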

For AI environments where rack configurations change frequently (nodes added, removed, or repositioned during cluster scaling), reusable blanking panels that install and remove without tools are a practical advantage over metal filler plates that require cage nuts and screwdrivers for every move.

Aisle Containment for GPU Clusters

Blanking panels seal the rack. Aisle containment seals the row. In high-density AI deployments, both are needed.

Hot aisle containment for AI racks prevents GPU exhaust air from mixing with the cold supply. Without containment, hot air rises from the rack tops and flows over into the cold aisle, forcing cooling units to work harder and reducing the effective cooling capacity of the facility.

EziBlank’s containment products include modular wall panels, end-of-row doors, and ceiling baffles that create a physical barrier between the hot and cold airstreams. The modular design allows containment to be built around existing rack layouts without structural modifications to the data centre.

For AI facilities with mixed-density rows (GPU racks alongside storage or networking racks), containment keeps the high-heat exhaust isolated from lower-density equipment that does not need the same volume of cooling air.

Floor Tiles and Cable Management

Raised floor data centres rely on pressurised underfloor plenums to deliver cold air through floor tiles. In AI environments, the volume of air needed at each rack position increases significantly. Standard floor tiles may not deliver enough airflow to the highest-density racks.

EziBlank’s directional and high-airflow floor tiles allow operators to control exactly how much cold air reaches each rack position. Directional tiles aim airflow at specific racks or hot spots, while high-airflow tiles increase the volume of air delivered through individual tile positions.
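
To check whether a tile layout can keep up, compare the airflow a rack needs with what each tile delivers. In this sketch the per-tile figures (0.2 m³/s for a standard perforated tile, 0.5 m³/s for a high-airflow tile) are hypothetical placeholders; actual delivery depends on the tile's published performance at the plenum pressure you measure. The sketch also assumes standard air properties and a 12 K rise across the rack.

```python
# Sketch: perforated floor tiles needed at one rack position.
# Assumptions: air density 1.2 kg/m³, specific heat 1005 J/(kg·K), a 12 K rise across
# the rack, and hypothetical per-tile airflow figures (use manufacturer data in practice).
import math

AIR_DENSITY_KG_M3 = 1.2
AIR_CP_J_PER_KG_K = 1005.0


def tiles_needed(rack_kw: float, delta_t_k: float, tile_airflow_m3s: float) -> int:
    """Whole tiles required so tile delivery meets the rack's airflow demand."""
    if delta_t_k <= 0 or tile_airflow_m3s <= 0:
        raise ValueError("delta_t_k and tile_airflow_m3s must be positive")
    demand_m3s = rack_kw * 1000.0 / (AIR_DENSITY_KG_M3 * AIR_CP_J_PER_KG_K * delta_t_k)
    return math.ceil(demand_m3s / tile_airflow_m3s)


if __name__ == "__main__":
    hypothetical_tiles = {"standard perforated": 0.2, "high-airflow": 0.5}  # m³/s per tile
    for name, per_tile in hypothetical_tiles.items():
        print(f"40 kW rack, {name}: {tiles_needed(40.0, 12.0, per_tile)} tiles")
```

With these placeholder numbers, a 40 kW rack needs the airflow of 14 standard tiles but only 6 high-airflow tiles, which is why tile choice matters more as density rises.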

Cable cutouts and floor grommets are another common source of air leakage. KOLDLOK raised floor grommets seal around cable bundles passing through the floor, preventing cold air from escaping before it reaches the racks. In high-density environments where every unit of cooling capacity matters, sealing these leaks recovers airflow that would otherwise be wasted.
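
A rough way to size the leak through an unsealed cutout is the standard orifice equation, Q = C_d · A · √(2ΔP / ρ). The sketch below assumes a discharge coefficient of about 0.61 (typical of a sharp-edged opening), a hypothetical 200 mm × 100 mm cutout, and an illustrative underfloor pressure of 12.5 Pa. Measured plenum pressure and real cutout geometry will change the result.

```python
# Sketch: airflow escaping through an open cable cutout, using the orifice equation
#   Q = Cd * A * sqrt(2 * dP / rho)
# Assumptions: Cd ~0.61 (sharp-edged opening), air density 1.2 kg/m³,
# and illustrative cutout size and plenum pressure.
import math

AIR_DENSITY_KG_M3 = 1.2
AIR_CP_J_PER_KG_K = 1005.0


def cutout_leakage_m3s(area_m2: float, plenum_pa: float,
                       discharge_coeff: float = 0.61, rho: float = AIR_DENSITY_KG_M3) -> float:
    """Volumetric flow (m³/s) through an opening driven by the plenum pressure difference."""
    if area_m2 < 0 or plenum_pa < 0:
        raise ValueError("area and pressure must be non-negative")
    return discharge_coeff * area_m2 * math.sqrt(2.0 * plenum_pa / rho)


if __name__ == "__main__":
    leak = cutout_leakage_m3s(area_m2=0.2 * 0.1, plenum_pa=12.5)  # hypothetical cutout
    # Cooling that air could have delivered to a rack with an assumed 12 K rise.
    lost_kw = leak * AIR_DENSITY_KG_M3 * AIR_CP_J_PER_KG_K * 12.0 / 1000.0
    print(f"Leak: {leak:.3f} m³/s, roughly {lost_kw:.1f} kW of cooling bypassed")
```

Multiplied across every open cutout on the floor, this is the kind of bypass airflow that sealing grommets are meant to recover.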

Real-Time Monitoring with EkkoSense

Physical airflow products control how air moves. Monitoring software shows you whether it is working.

EkkoSense AI cooling optimization provides real-time thermal visibility across the data centre floor. Wireless sensors placed at rack inlet and exhaust positions feed temperature, humidity, and airflow data into a centralised dashboard. The software uses machine learning to identify cooling inefficiencies, predict hot spots before they develop, and recommend adjustments to cooling set points.

For AI workloads, this level of visibility is especially valuable. GPU clusters generate dynamic heat loads that shift as training jobs start and stop. A rack that draws 80kW during a training run may idle at 10kW between jobs. Real-time monitoring detects these shifts and flags when inlet temperatures approach thermal limits, giving operations teams time to respond before hardware throttles.
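
The alerting idea described here can be sketched as a simple threshold check on rack inlet readings. This is not EkkoSense's implementation or API: the rack IDs, readings, and 2 K early-warning margin are hypothetical. The 27 °C limit is the upper end of ASHRAE's recommended inlet range for classes A1 to A4; newer ASHRAE guidance and GPU vendor specifications may call for a lower limit, so it is kept as a parameter.

```python
# Sketch: classify rack inlet readings against a temperature limit.
# Not a vendor API; thresholds and readings are illustrative.
from dataclasses import dataclass

INLET_LIMIT_C = 27.0   # upper end of ASHRAE's recommended range (classes A1-A4)
WARN_MARGIN_K = 2.0    # hypothetical early-warning band below the limit


@dataclass
class InletReading:
    rack_id: str
    inlet_c: float


def classify(reading: InletReading, limit_c: float = INLET_LIMIT_C,
             margin_k: float = WARN_MARGIN_K) -> str:
    """Return 'ALERT' at or above the limit, 'WARN' within the margin, else 'OK'."""
    if reading.inlet_c >= limit_c:
        return "ALERT"
    if reading.inlet_c >= limit_c - margin_k:
        return "WARN"
    return "OK"


if __name__ == "__main__":
    readings = [
        InletReading("gpu-row-a-01", 24.1),  # idle between training jobs
        InletReading("gpu-row-a-02", 25.6),  # training run ramping up
        InletReading("gpu-row-a-03", 27.4),  # over the limit
    ]
    for r in readings:
        print(r.rack_id, r.inlet_c, classify(r))
```

In practice you would likely also track the rate of change, because a rack ramping from idle to a full training load can move through the warning band quickly.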

EkkoSense also provides PUE tracking, cooling energy analysis, and capacity planning tools that help data centre managers quantify the return on airflow management investments.
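
PUE is defined as total facility energy divided by IT equipment energy, so cooling energy saved through better airflow management shows up directly in the ratio. The energy figures and the 10% cooling reduction below are hypothetical, chosen only to show the arithmetic.

```python
# Sketch: Power Usage Effectiveness (PUE) before and after a cooling-energy reduction.
# All energy figures are hypothetical.


def pue(it_kwh: float, cooling_kwh: float, other_kwh: float) -> float:
    """PUE = total facility energy / IT equipment energy."""
    if it_kwh <= 0:
        raise ValueError("it_kwh must be positive")
    return (it_kwh + cooling_kwh + other_kwh) / it_kwh


if __name__ == "__main__":
    it, cooling, other = 1_000_000.0, 400_000.0, 100_000.0  # kWh per year (hypothetical)
    before = pue(it, cooling, other)
    after = pue(it, cooling * 0.9, other)  # assume airflow fixes cut cooling energy by 10%
    print(f"PUE {before:.2f} -> {after:.2f}, saving {cooling * 0.1:,.0f} kWh/year")
```

Under these assumptions PUE falls from 1.50 to 1.46, because the IT load stays the same while total facility energy drops.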

A Layered Approach to AI Cooling

No single product solves AI cooling on its own. Effective thermal management in high-density environments requires multiple layers working together:

Blanking panels seal unused rack spaces and stop hot air recirculation at the rack level. Aisle containment separates hot and cold airstreams at the row level. Floor tiles and grommets control how cold air is delivered from the underfloor plenum. Monitoring software provides the data to verify that each layer is performing as intended.

EziBlank supplies all four layers. The products integrate with existing data centre infrastructure and do not require proprietary mounting hardware or specialised installation crews. For non-standard rack sizes or custom containment requirements, EziBlank also offers tailor-made solutions built to specific facility dimensions.

Getting Started

If you are planning an AI deployment, retrofitting an existing facility for GPU workloads, or scaling an existing AI cluster, airflow management should be part of the planning process from day one, not an afterthought when temperatures start climbing.

To discuss your AI cooling requirements, contact the EziBlank team. For help choosing the right blanking panel for your racks, see the product comparison page. For a quick estimate of the energy savings from blanking panel deployment, try the ROI calculator.

Frequently Asked Questions

Do blanking panels make a real difference in high-density AI racks?

Yes. At the power densities typical of GPU computing (40kW to 100kW per rack), even a few open U-spaces can raise inlet temperatures enough to trigger thermal throttling. Blanking panels seal these gaps and keep cold air flowing through the equipment rather than bypassing it.

Can EziBlank products handle racks above 30kW?

EziBlank blanking panels, containment systems, and floor tiles are passive airflow management products. They control the path of airflow, not the volume. As long as the cooling infrastructure delivers sufficient cold air, EziBlank products direct that air where it needs to go, regardless of rack density.

What monitoring does EziBlank offer for AI environments?

EkkoSense provides real-time thermal monitoring with wireless sensors, machine learning analytics, and automated cooling recommendations. It is purpose-built for dynamic workloads where heat loads shift frequently, which is common in AI training environments.

Do I need containment if I already have blanking panels?

Blanking panels and containment address different problems. Blanking panels seal gaps within individual racks. Containment separates the hot and cold airstreams across entire rows. For high-density environments, both are recommended. In lower-density setups, blanking panels alone may be sufficient.